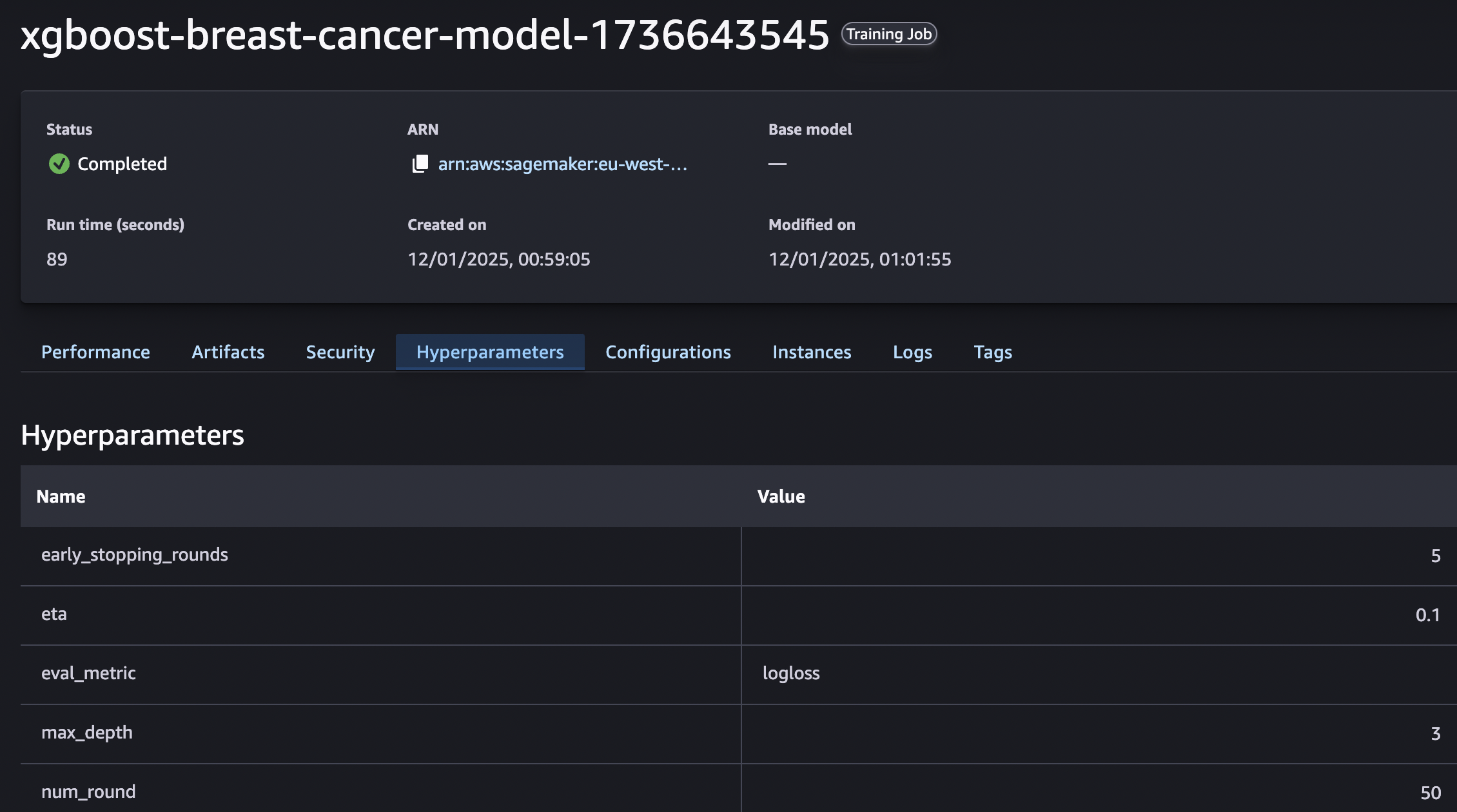

Hyperparameter tuning is the process of optimizing the configuration settings that govern how a machine learning model learns from data. Unlike model parameters (which are learned during training), hyperparameters such as the learning rate or the number of training epochs are set before training begins and significantly impact a model’s performance. Tuning these settings helps the model reach a better balance between accuracy and efficiency, making hyperparameter tuning a critical step in building robust machine learning solutions.
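To make the distinction concrete, here is a minimal sketch in plain Python. It is an illustration only: the `train` function, the toy dataset, and the chosen values are assumptions made for this example, not part of any specific service or library. The weight `w` is a model parameter learned during training, while `learning_rate` and `epochs` are hyperparameters fixed before training starts.

```python
def train(learning_rate, epochs, data):
    """Fit y = w * x with plain gradient descent on mean squared error.

    learning_rate and epochs are hyperparameters (chosen up front);
    w is the model parameter (learned from the data).
    """
    w = 0.0
    n = len(data)
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / n
        w -= learning_rate * grad
    loss = sum((w * x - y) ** 2 for x, y in data) / n
    return w, loss


# Toy data generated from y = 2x (hypothetical, for illustration only).
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

# Same model, same data, same number of epochs: only the learning rate differs.
w_slow, loss_slow = train(learning_rate=0.001, epochs=20, data=data)
w_good, loss_good = train(learning_rate=0.05, epochs=20, data=data)

print(f"learning_rate=0.001 -> w={w_slow:.3f}, loss={loss_slow:.4f}")
print(f"learning_rate=0.05  -> w={w_good:.3f}, loss={loss_good:.4f}")
```

With the smaller learning rate the model is still far from the true slope after 20 epochs, while the larger one converges closely. That gap is what tuning aims to close.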

Hyperparameter tuning can turn a good model into a great one by optimizing the configuration that controls how the model trains.

Incorporating hyperparameter tuning into your ML workflow ensures that your models are not only high-performing but also cost-effective and scalable, making them ideal for real-world applications.

Streamline ML model performance with optimized hyperparameters. Reduce costs and training times by automating the search for the best configurations using AWS SageMaker. Say goodbye to manual trial and error and hello to efficient resource utilization.
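As a rough idea of what an automated search replaces, the sketch below runs a small random search in plain Python. It does not use the SageMaker API. The `validation_loss` function is a hypothetical stand-in for a real training job, and the hyperparameter names and ranges (`learning_rate`, `max_depth`) are assumptions chosen for illustration.

```python
import math
import random


def validation_loss(learning_rate, max_depth):
    """Hypothetical stand-in for a training job that reports a validation loss.

    In a real workflow this would train a model and evaluate it; here it is
    a simple synthetic function so the example stays self-contained.
    """
    return (math.log10(learning_rate) + 2) ** 2 + 0.1 * (max_depth - 6) ** 2


def random_search(n_trials, seed=0):
    """Sample random configurations and keep the one with the lowest loss."""
    rng = random.Random(seed)  # Seeded so the search is reproducible.
    best = None
    history = []
    for _ in range(n_trials):
        config = {
            # Sample the learning rate on a log scale between 1e-4 and 1.
            "learning_rate": 10 ** rng.uniform(-4, 0),
            # Sample an integer depth between 2 and 10 (inclusive).
            "max_depth": rng.randint(2, 10),
        }
        loss = validation_loss(**config)
        history.append((config, loss))
        if best is None or loss < best[1]:
            best = (config, loss)
    return best, history


best, history = random_search(n_trials=50)
print("best config:", best[0])
print("best loss:", round(best[1], 4))
```

A managed tuning service follows the same loop in spirit (propose a configuration, run a job, record the metric, keep the best), typically with smarter search strategies and jobs running in parallel.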

Boost model accuracy without the hassle. With CloudStartupTech, leverage AWS SageMaker’s distributed infrastructure to efficiently scale hyperparameter tuning while minimizing computational overhead.

Optimize your ML development costs. Our automated hyperparameter tuning targets peak performance while cutting training time and cloud expenses, so you can focus on results instead of resource management.

Achieve scalability without compromise. Whether you are working with small datasets or large-scale models, AWS SageMaker’s tools adapt seamlessly, making hyperparameter tuning cost-efficient and time-saving.

Maximize your cloud resources. Automated search finds strong configurations for your ML model, reducing wasted compute and improving output quality.

Enjoy a clear, reproducible tuning process. All results and metrics are securely logged in S3, providing insights that enhance performance while ensuring transparency for future refinements.

© Copyright Cloudstartuptech, all rights reserved.